My personal proposal to you is: try to update your splunk to current one as soon as possible. Here is Splunk's information about this vulnerability: BUT as your version is out of support, I'm not sure if Splunk has verified that for your version (probably not). The current knowledge is the core splunk is used log4j only for DFS and if that is not in use then there shouldn't be an issue. You must contact to Splunk support and ask if they could give those to you.

Unfortunately you need some middle versions to reach this target level, which are not available from any more. Oldest supported version is currently 8.1.x. You should plan to update it to supported versions as soon as possible. Once you deploy the application to MuleSoft Anypoint Platform CloudHub and disable CloudHub logs, it will use the log4j2 configuration which we have createdįeel free to drop your questions in the comments section on MuleSoft Splunk Integration.First your splunk installation is quite old and out of support. The next step is to add CloudHub log appenders to the log4j2.xml file in the mule application.Īn example log4j2 file with a custom cloudhub appender. Once that is done, you will have an option to disable logs “Disable Application Logs” at runtime while deploying the applicationĢ. Raise a support ticket with MuleSoft to disable CloudHub application logs. Below are the few steps that need to be followed.ġ. To use our logging, we need to make specific changes to the log4j file so that we can override the default log4j configuration for CloudHub. CloudHub uses its default logging mechanism. Sending MuleSoft Anypoint Platform (CloudHub) logs will require a slightly different process. Pushing MuleSoft Anypoint Platform logs to Splunk On selecting that, you can see the logs being pushed to Splunk After that, click on “Data Summary” and click on “Source Types” and search for Log4j ( see Step 3), and select log4j.ġ0. To check that, click on the “Search and Monitoring” option.ĩ. Once you start the application, you can see logs flowing to Splunk. Here is a snippet for the application that will be sending logs to SplunkĨ. For this purpose, we can either use JSON logs or add log information in JSON format. For better log analysis and monitoring, it is recommended to use JSON logs. We can also add SSL if the URL is HTTPS.ħ. Add the following snippet in the log4j2.xml in the mule application. You can also enable SSL for this token and set the port. 
Once you have created the token, make sure to enable the token by going to global settings. Next Steps will involve configuring the HTTP Appender in the log4j file to connect to Splunkĥ. The token value will be used to connect to Splunk from the log4j file in the MuleSoft application. Complete all the steps, and you will get the token value. After Clicking on New Token, Click on HTTP Event Collector and add log4j as the source, since we will be sending logs from log4j to SplunkĤ.

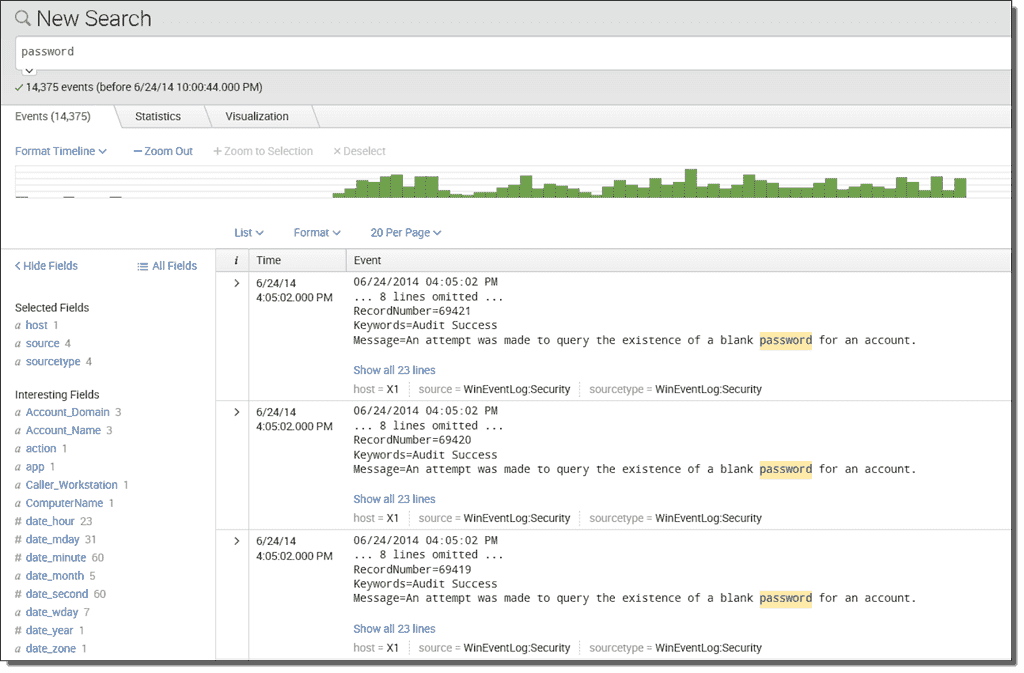

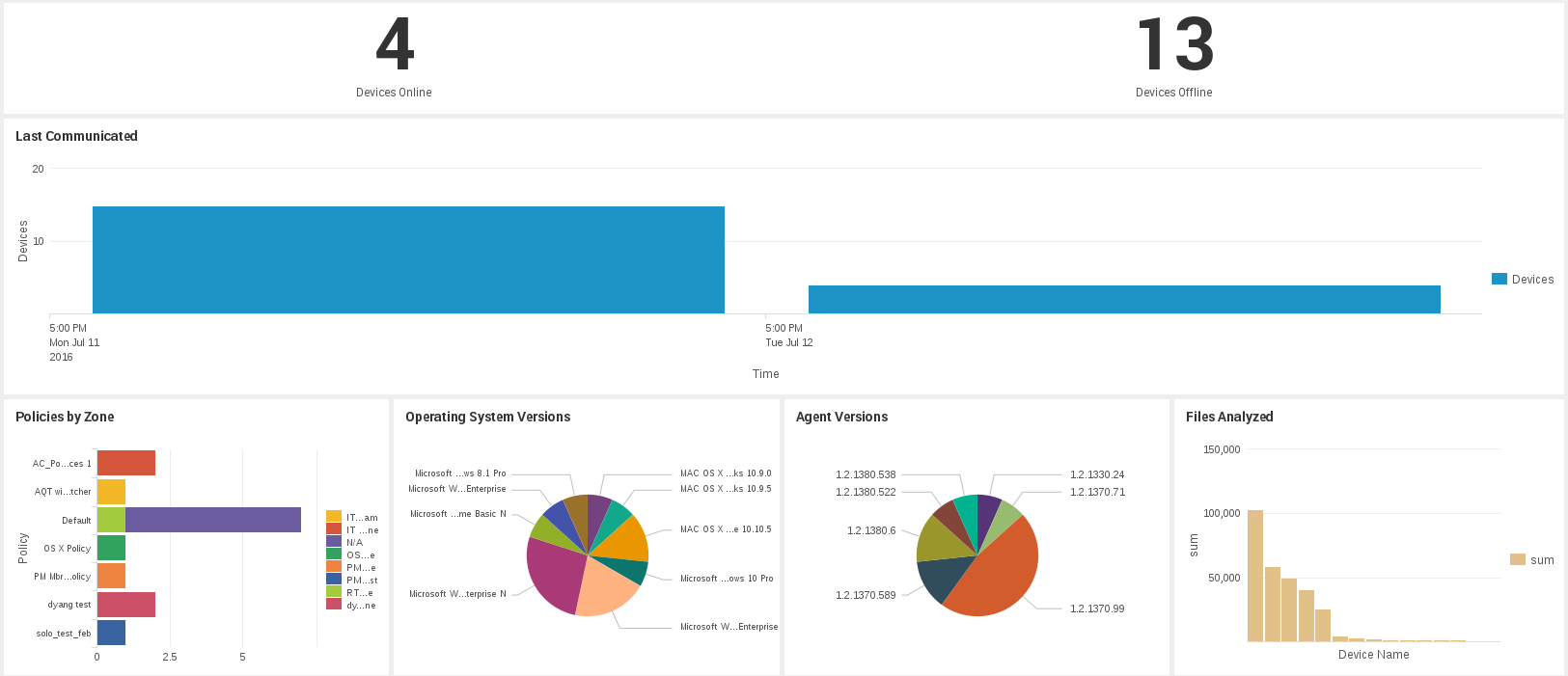

Go to Settings > Data > Data Inputs > New Tokenģ. Logging to Splunk can be enabled on Cloud Hub and On-Premise.įirst things first, we need to create a token in Splunk.Ģ. Required data How to use Splunk software for this use case Next steps A serious vulnerability (CVE-2021-44228) in the popular open source Apache Log4j logging library poses a threat to thousands of applications and third-party services that leverage this library, allowing attackers to execute arbitrary code from an external source. Today we will be using Splunk as an external logging tool and integrating it with MuleSoft using Log4j2 HTTP appender to send mule application logs to Splunk. The blog typically talks MuleSoft Splunk Integrationįor a robust logging mechanism, it is essential to have an external log analytic tool to further monitor the application. Although CloudHub has a limitation of 100 MB of logs or 30 days of logs. MuleSoft provides its logging mechanism for storing application logs. Some external logging tools include ELK and Splunk Logging must be consistent, reliable so we can use that information for discovering relevant data. Logging is an essential part of monitoring and troubleshooting issues and any production errors or visualizing the data.
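The original snippet for the Splunk HTTP appender did not survive on this page, so here is a minimal sketch of what such a log4j2.xml could look like. It is an illustration under stated assumptions, not the configuration the post originally showed: the host `splunk.example.com`, the system property `splunk.hec.token`, and the appender name `Splunk` are placeholders chosen for this example. It uses the Log4j2 `Http` appender and the Splunk HTTP Event Collector raw endpoint on its default port 8088.

```xml
<?xml version="1.0" encoding="UTF-8"?>
<!-- Minimal sketch. Host, port and token below are placeholders (assumptions), not values from this post. -->
<Configuration status="WARN">
    <Appenders>
        <!-- Log4j2 HTTP appender that posts each log event to the Splunk HTTP Event Collector (HEC). -->
        <!-- The /services/collector/raw endpoint accepts plain text; 8088 is the default HEC port. -->
        <Http name="Splunk" url="https://splunk.example.com:8088/services/collector/raw">
            <!-- HEC expects the token in an Authorization header of the form "Splunk <token>". -->
            <!-- Here the token is read from a hypothetical JVM system property instead of being hard-coded. -->
            <Property name="Authorization" value="Splunk ${sys:splunk.hec.token}"/>
            <PatternLayout pattern="%d{ISO8601} %-5p [%t] %c: %m%n"/>
        </Http>
    </Appenders>
    <Loggers>
        <Root level="INFO">
            <!-- Route every INFO-and-above event to the Splunk appender. -->
            <AppenderRef ref="Splunk"/>
        </Root>
    </Loggers>
</Configuration>
```

If the collector uses a certificate that the JVM does not already trust, the `Http` appender also accepts a nested `Ssl` element with a trust store. Check the Log4j2 version bundled with your Mule runtime before relying on that.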
